Diaphragms

Hyperarchitectures of obfuscation

I've been thinking about ghosts, lately. Not the spooky bedsheet people, more like the concept.

Like a sprawling, endless spreadsheet, being typed out row-by-row by the invisible hands of automated systems.

Like a bomb whistling in Gaza's night, blindly falling towards a Schrödinger's target.

Like me running out of the metro the other day, late, catching a glimpse of someone I didn't expect to see.

At least I think I saw them. I'm not positive now, but I'm quite confident I was in the moment. Probably. Which is weird, because I hadn't thought about this person in a long time and they aren't supposed to be in this part of the country anyway.

Point is that a tiny stimulus, probably less than a second long, has dominated my mind for the last week. And that, you can call a ghost.

F.[1] is - was? - a friend of a friend from another time in life. The type of person that you run into at a party every three or four months for a while, exchanging superficial updates on studies, relationships, projects and readings in different kitchens, holding paper cups of the same sticky supermarket red, until you're sharing a bummed cigarette and you get blindsided by the awareness that oh fuck, this person actually knows me.

At one point, however, they kind of disappeared from the periphery of my social circle. Suddenly. Our mutual friends explained it as them becoming ‘annoying’, increasingly vocal about their animal product consumption or scolding them for buying new phones: didn't they know about the rare earths in them, how some parts of the world far away are being destroyed just to mine them? Didn't they know how many thousands of liters of water it takes to refine a kilo of lithium?

What looked like a newfound political awareness rapidly morphed into a clearly more structural unwellness. Their diet shrank violently, 'cause even if you go vegan it's surprisingly hard to find something that's really, actually cruelty-free. They threw out a good chunk of their sweatshop-made clothing, reeking of inhumane workdays for shit pay and the odd accidental amputation due to non-existent worker safety. After a short while they began not going out much, as the endless expanse of concrete and asphalt of the city was enough to evoke harrowing ghost images of quarries and desertification.

The last news I got is that they mostly spent their time online, obsessively checking stats by NGOs and research bodies and trying not to think about the carbon footprint of data centers.

Opacization

The story of F.'s peculiar malaise haunted me for a long time.

Not only because it was sad, but because it was, fundamentally, correct.

Most everything in our way of life does involve resource extraction and exploitation. Most of it does involve levels of ugliness that none of us would feel ready to enforce in first person. Let's face it, would you have the guts to butcher a single chicken? Like, kill it dead for real? I wouldn't.

From a purely analytical perspective, F. was correct: it's horror all the way down. Basically any consumer product or service has some steps in its production history that, if looked into closely, are questionable. As in, the kind of fucked up we'd do anything not to have happen to our family or our city.

But the human brain is mercifully myopic. No one[2] likes to do the violence, but we all like the little treats we get in return, and at the end of the day we're basic animals: wired to be more treat-motivated than we are violence-averse. So, those who can, delegate it. Those who can't delegate, subdivide the process so that the horrible bits are so chopped up that you can't recognize them unless you squint.

We built, brick by brick, a whole megastructure of delegation, the one true operating system of the contemporary world. A most complex architecture of obfuscation of our unsavoury, animal hardwiring.

In the oracular, imperfect and beautiful Hyperobjects, Timothy Morton postulates that one of the core characteristics of hyperobjects is that they can't be seen. Which doesn't mean they are invisible, but that their scale is such that we can't perceive them all at once: we can glance at a single appendage, but never take in the whole thing.

I don't know what switch flipped in F.'s brain, but the more I think about it, the more it feels like it has to do with a sudden and dramatic loss of their natural cognitive myopia, with every diaphragm between them and the violence becoming explicit and transparent.

And, I mean, who needs cosmic horror when you have that eventuality.

Lavender nightmares

There is, rightfully, much consternation these days about Lavender, the automatic target designation system used by the IDF to create kill lists for airstrikes in Gaza. Most of the attention is justifiably on the system's astronomical error rate (around 10%) and the inaccuracy of some of its components, which resulted in false positives that triggered strikes when the target wasn't home, killing dozens of innocent civilians (generally women and children) for no tactical reason. Not that there is one that would justify something like that, of course.

The ins and outs of the system's application are covered in this thorough investigation by +972 Magazine and Local Call, penned by Yuval Abraham. If you have the time, I urge you to read it all. If you don't have the time, there's probably something less important that you can drop to find the 15 minutes it's gonna take.

If you opened the article and looked at the embedded photos (late trigger warning, I guess), you might find yourself asking a question. Which is, who is responsible?

Some would suggest that military operations are already highly bureaucratized to minimize the feeling of responsibility for the violence of anyone involved, but this is different.

In this instance, the core process of deciding who lives and who dies has been hollowed out, distributed among innumerable individuals and organizations across time and space. Those who ideated the system. Those who coded it. Those who trained it and those who gathered the data. Those who fiddled with the parameters in the spreadsheet to generate longer or shorter lists. Those who rubberstamped them. Those who flew the planes, and the list could probably go on for a while.

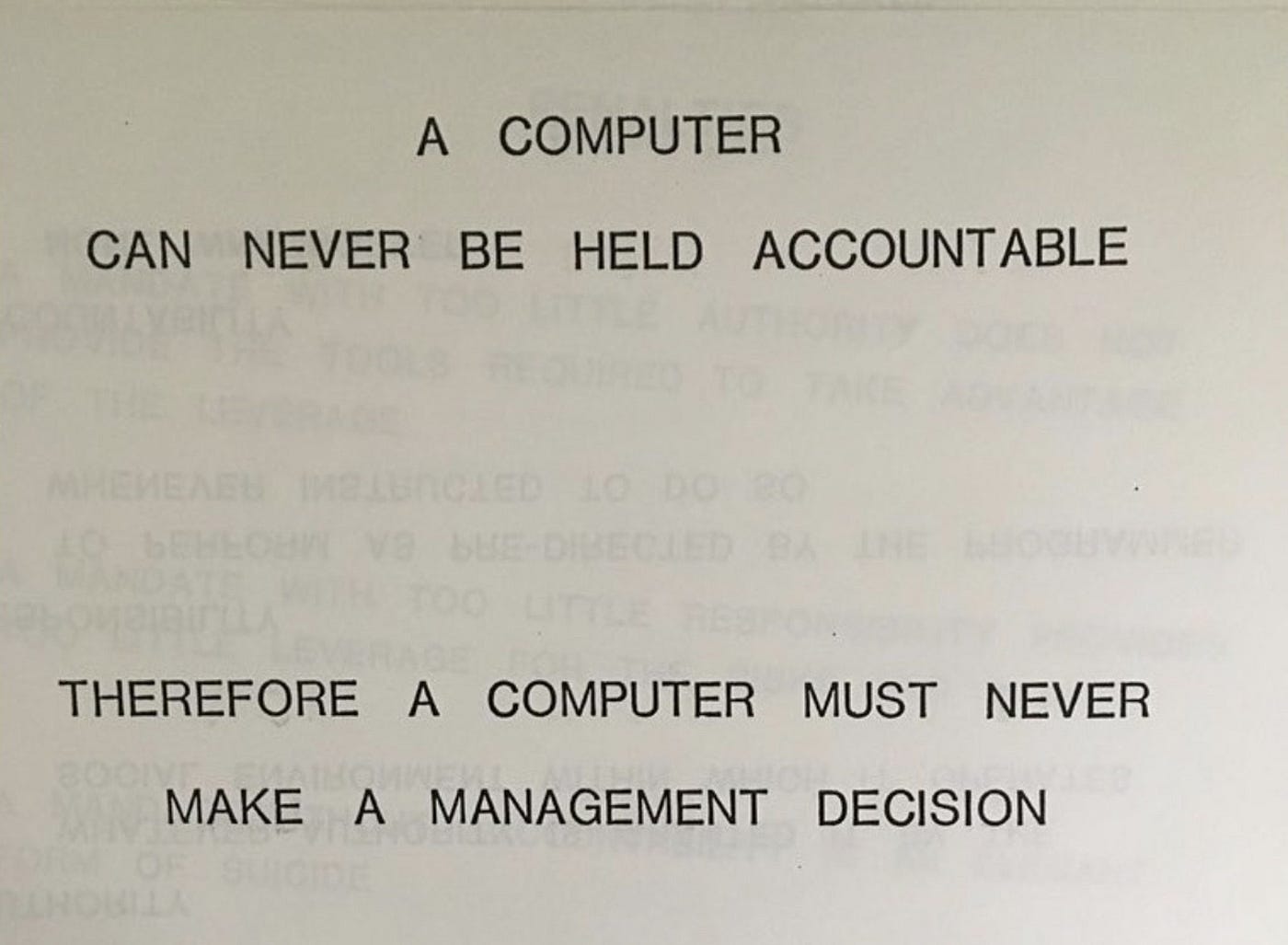

Crucially, all of this would remain true even if the system reached 1000% accuracy. It's not, as the kids say, a skill issue: it's inherent in the design. And this, to me, is a mask-off moment about AI in general.

Much of the current AI hype is just hype. It's gonna deflate in due time, when a clear view of what the technology actually can or can't do stabilizes[3] and it just becomes another tool in the great economic machinery. The miracle talkers, the wundertech freaks and the gurus will wash away, many of them wealthier, to move on to the next thing they can convince some business development exec will make them millions. What is likely not to get washed away is yet another layer of cellophane in the obfuscating machinery.

Maybe AIs of the future will be able to understand the data they are processing[4], maybe they won't. Maybe they will steal our jobs, maybe their outputs will plateau at the current mediocre level.

The one thing I'm pretty sure they will be is: massively used as diaphragms. Machines whose one actual job is absolving someone from responsibilities and, maybe more importantly, absolving them from feeling responsible. I didn’t do it, the robot did.

What do we do with all of this? You can't even look at hyperobjects, let alone fight them. Sure as hell catching whatever got F. doesn’t sound like a desirable, or useful, option.

So the answer is: we do the usual.

Call out the bullshit, stave off the horror, do our ever insufficient best.

[1] the following account will be heavily edited, for an array of reasons. Fundamental truths and expressive licenses have been spun so closely together that there's no point in trying to parse them, don’t, thanks.

[2] meaning, normal people

[3] and it will. It always does.

[4] thus becoming actual Artificial Intelligences, and not just machine learning models